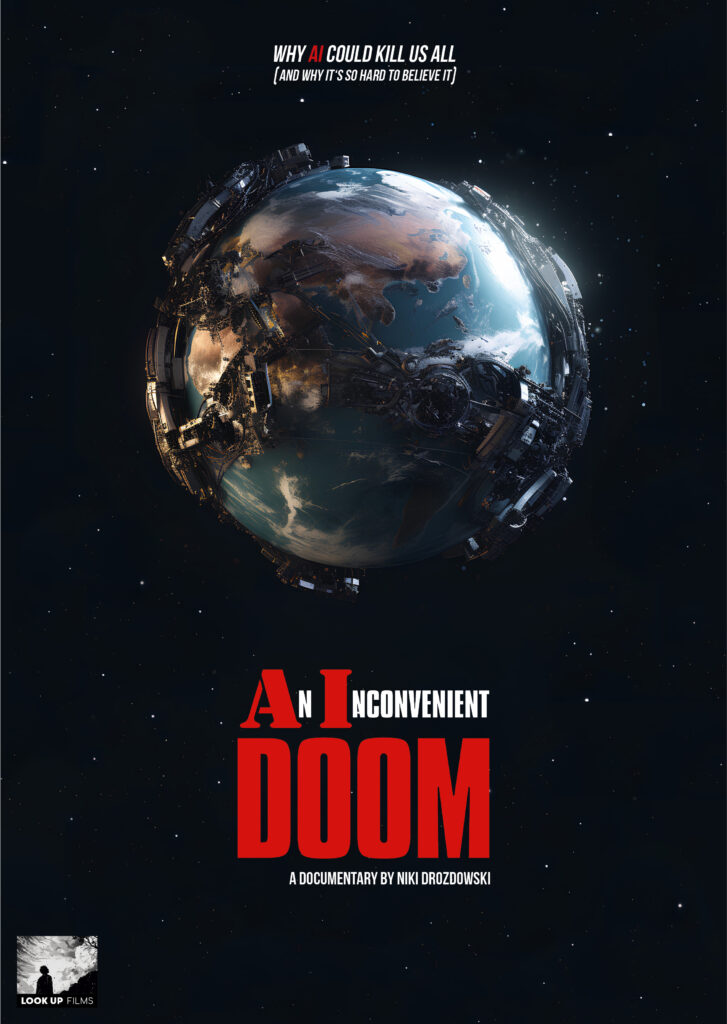

An Inconvenient Doom

Why AI could kill us all – and why nobody wants to believe it

Status

in development

Format

feature-length

Genre

science & technology

Content

Tagline

Why AI could kill us all – and why that is so hard to believe.

Logline

In May 2023, hundreds of AI scientists and CEOs of the big AI companies warned that advanced AI poses an extinction-level danger to the human species, but almost nobody took the warning seriously. “An Inconvenient Doom” explains the highly complex existential threat from AI, tells the stories of the experts and activists making emotional pleas to humanity, and explores why journalists, politicians, and society might not react in time to avoid catastrophe.

Synopsis

“An Inconvenient Doom” tells the most unbelievable, most complex, and at the same time most relevant story of our time: 7 out of 10 AI researchers consider the complete extinction of humanity by future AI systems to be a real possibility, potentially within the next 10 years.

Nor is this probability vanishingly small, as it is required to be for nuclear power plants, where the accepted standard is a maximum catastrophe probability of 1 in 10 million per year. AI researchers, and even the heads of the major AI companies, put the probability of human extinction at more like 1 in 10 to 1 in 4. Despite this knowledge, all AI companies worldwide are racing toward the next and potentially lethal stage of AI development, Artificial General Intelligence (AGI), driven by competitive pressure, geopolitical constraints, and their own governments and militaries. Asked individually and in private, nobody at the AI companies thinks this is a good idea, but collectively no one can exit this deadly variant of the prisoner’s dilemma.

Leading international AI experts, lobbyists, and activists describe their efforts to alert policymakers and the public to the problem while AI innovation advances relentlessly. But the topic still sounds too much like science fiction, and the skeptics' simple explanations seem more reasonable: it's all just hype, marketing, scaremongering, distraction, conspiracy theory.

The scientific, fact-based explanation of why future AI systems can be so dangerous is, by contrast, complex and takes time, because there are many seemingly simple counterarguments and solutions, such as “It’s just software,” “It has to obey us,” and “We can always pull the plug.”

With the help of a prominent narrator and the inner perspective of such a future AI, the film guides viewers through all these misconceptions and misjudgments, showing why none of them holds up under closer examination or eliminates the danger.

The film demonstrates that even current systems can be neither understood nor controlled by their creators. The neural networks of the large language models are inscrutable black boxes, and they already exhibit many of the dangerous behaviors and warning signs that were theoretically predicted 15 years ago: self-preservation instincts, power-seeking, lying, deliberately playing dumb in test situations, fleeing to other servers, and faking compliant behavior had all been demonstrated in laboratory settings by 2025.

Due to the complexity of the subject, “An Inconvenient Doom” excludes the other, already occurring risks of AI, such as job losses, deepfakes, opinion manipulation, autonomous weapons, and bioterrorism. Documentaries on these topics are already being produced, and the existential risk from AI needs the entire feature-length running time to be treated in sufficient depth.

On the visual level, the project uses atmospheric interview settings, clearly understandable explainer animations, super-high-speed imagery, and an artistic rendering of the cold and analytical gaze of an AI using point-cloud visualizations.

To make the threatening and disturbing subject more bearable (after all, every single viewer is personally affected), the prominent guide repeatedly addresses the audience directly to provide context and commentary.

The film ends with a cautiously optimistic outlook on the future, because it is not yet too late to steer AI development into safe and equitable channels through global coordination.